Paul-Edouard Sarlin*¹, Mihai Dusmanu*¹, Johannes L. Schönberger², Pablo Speciale², Lukas Gruber², Viktor Larsson², Ondrej Miksik², Marc Pollefeys¹,²

¹ETH Zurich | ²Microsoft

European Conference on Computer Vision 2022

Paper | Dataset | Code | Leaderboard | Poster

News

- October 2022: Initial release of the evaluation data for benchmarking localization and mapping.

- October 2023: Release of the full raw data, which includes lidar point clouds, on-device depth maps, IMU, etc. The data can be accessed through the same process.

Abstract

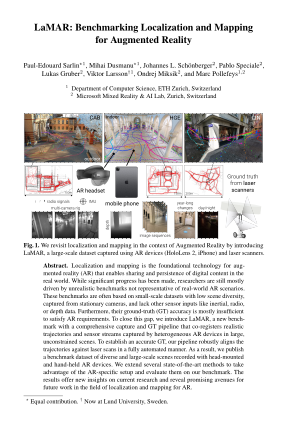

Localization and mapping is the foundational technology for augmented reality (AR) that enables sharing and persistence of digital content in the real world. While significant progress has been made, researchers are still mostly driven by unrealistic benchmarks not representative of real-world AR scenarios. These benchmarks are often based on small-scale datasets with low scene diversity, captured from stationary cameras, and lack other sensor inputs like inertial, radio, or depth data. Furthermore, their ground-truth (GT) accuracy is mostly insufficient to satisfy AR requirements. To close this gap, we introduce LaMAR, a new benchmark with a comprehensive capture and GT pipeline that co-registers realistic trajectories and sensor streams captured by heterogeneous AR devices in large, unconstrained scenes. To establish an accurate GT, our pipeline robustly aligns the trajectories against laser scans in a fully automated manner. As a result, we publish a benchmark dataset of diverse and large-scale scenes recorded with head-mounted and hand-held AR devices. We extend several state-of-the-art methods to take advantage of the AR-specific setup and evaluate them on our benchmark. The results offer new insights on current research and reveal promising avenues for future work in the field of localization and mapping for AR.

BibTeX Citation

@InProceedings{sarlin2022lamar,
  author    = {Paul-Edouard Sarlin and
               Mihai Dusmanu and
               Johannes L. Sch\"onberger and
               Pablo Speciale and
               Lukas Gruber and
               Viktor Larsson and
               Ondrej Miksik and
               Marc Pollefeys},
  title     = "{LaMAR: Benchmarking Localization and Mapping for Augmented Reality}",
  booktitle = "ECCV",
  year      = "2022",
}

We thank brighter AI for supporting us in anonymizing all images captured for LaMAR.